Google Hackathon x Machine Talents

Building an AI-powered symptom triage agent with two friends in three hours at a Google hackathon — the chain-of-agents architecture, late-night pivots, and lessons learned.

Key Takeaways

- In a 3-hour Google hackathon hosted by Machine Talents, our team of three shipped an AI-powered symptom triage agent end to end.

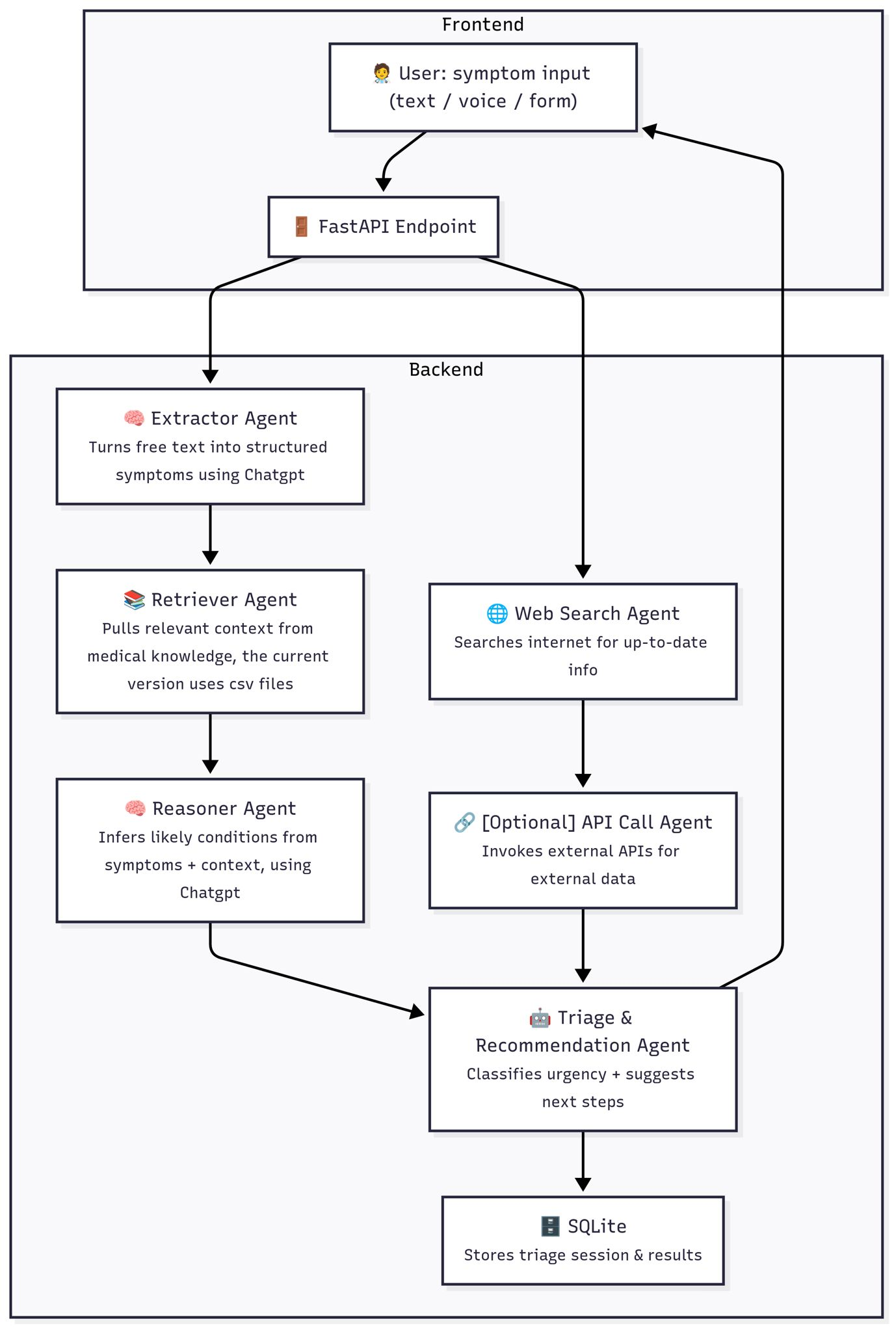

- Architecture: a chain of specialised agents — Extractor, Retriever, Reasoner, Web Search, and Triage — each doing one narrow task before handing off.

- Stack: Next.js frontend, FastAPI backend, SQLite storage, with the medical knowledge base built from CSV symptom-to-disease tables.

Have you ever wondered what it's like to be in a room full of brilliant minds, fueled by caffeine, racing the clock, and sharing a passion for solving real-world problems? Well, that was me at the Google hackathon. In this post, I'm going to take you on the rollercoaster journey of how my team and I built an AI-powered diagnosis agent, from a simple idea to a working prototype, all in barely 3 hours.

How did the hackathon team form?

The hackathon started with a flurry of excitement and a bit of chaos. My friend Mohammed called me up and asked if I wanted to do a Google hackathon. I was hooked immediately and said yes. He already had our other teammate, Tom Lam, on board. We made a WhatsApp group chat and spent the first few hours brainstorming and scribbling ideas until we landed on the project we thought we were going to build. Then, on the very last day before the hackathon, a message went out to all participants announcing a challenge: build an AI-powered symptom triage tool. We decided that was the challenge we were going to tackle, so we started from scratch again with ideas. Tom Lam, being the cracked dev, made a roadmap of the technology for both backend and frontend, and with that we started researching the technologies and tools we were going to use at the hackathon.

What was the technical plan?

With a clear idea and a tight deadline, we knew we had to be strategic. We mapped out our technical approach, which became our guide for the entire hackathon. Our goal was to create a "chain" of AI agents, each with a specific task, to process user input and deliver a reliable diagnosis.

Here’s a breakdown of the roadmap we followed:

Frontend Interaction: It all started with the user. They could input their symptoms through text, voice, or a structured form on our Next.js frontend. This information was then sent to our backend.

FastAPI Endpoint: Our backend, built with FastAPI, served as the central hub. It received the raw data from the frontend and kicked off the AI agent workflow.

The Agent Chain in Action:

- Extractor Agent: The first agent in the chain was the Extractor Agent. Its job was to take the user's free-form text and turn it into structured data, identifying key symptoms, duration, and severity.

- Retriever Agent: Next, the Retriever Agent pulled relevant context from our medical knowledge base, which we built from CSV files containing symptom-to-disease information.

- Reasoner Agent: The structured data and the retrieved context were then passed to the Reasoner Agent. This agent's role was to infer likely conditions from the combined information.
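To make the handoff between these three agents concrete, here's a minimal sketch of the idea. It is not our hackathon code: plain keyword matching stands in for the LLM calls, the tiny inline CSV is a made-up stand-in for the real symptom-to-disease tables, and every function name here is hypothetical.

```python
import csv
import io
from dataclasses import dataclass, field

# Hypothetical stand-in for the CSV symptom-to-disease tables.
KNOWLEDGE_CSV = """symptom,disease
fever,flu
cough,flu
cough,common cold
sneezing,common cold
headache,migraine
"""


@dataclass
class Extracted:
    """Structured output of the Extractor step."""
    symptoms: list[str] = field(default_factory=list)
    severity: str = "unknown"


def load_knowledge(text: str) -> dict[str, set[str]]:
    """Map each symptom to the set of diseases listed for it in the CSV."""
    table: dict[str, set[str]] = {}
    for row in csv.DictReader(io.StringIO(text)):
        table.setdefault(row["symptom"], set()).add(row["disease"])
    return table


def extractor(message: str, known: set[str]) -> Extracted:
    """Turn free text into structured data (keyword match, not an LLM)."""
    words = set(message.lower().replace(",", " ").replace(".", " ").split())
    if "severe" in words:
        severity = "severe"
    elif "mild" in words:
        severity = "mild"
    else:
        severity = "unknown"
    return Extracted(symptoms=sorted(words & known), severity=severity)


def retriever(extracted: Extracted, table: dict[str, set[str]]) -> dict[str, set[str]]:
    """Pull the knowledge-base rows relevant to the extracted symptoms."""
    return {s: table[s] for s in extracted.symptoms}


def reasoner(context: dict[str, set[str]]) -> list[tuple[str, int]]:
    """Rank diseases by how many of the reported symptoms point to them."""
    counts: dict[str, int] = {}
    for diseases in context.values():
        for disease in diseases:
            counts[disease] = counts.get(disease, 0) + 1
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))


def run_chain(message: str) -> list[tuple[str, int]]:
    """Extractor -> Retriever -> Reasoner, each passing structured output on."""
    table = load_knowledge(KNOWLEDGE_CSV)
    extracted = extractor(message, set(table))
    context = retriever(extracted, table)
    return reasoner(context)
```

Calling `run_chain("I have a severe fever and a cough.")` ranks "flu" first, because two of the reported symptoms point to it. The point of the sketch is the shape: each step takes the previous step's structured output and does one narrow job.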

External Data Integration (The Green Loop):

- Web Search Agent: To ensure our information was up-to-date, we incorporated a Web Search Agent. This agent searched the internet for the latest medical information related to the user's symptoms.

- API Call Agent (Optional): We also planned for an optional API Call Agent that could invoke external APIs for additional data, though we focused on the core agents during the hackathon.
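One way to treat these external agents as optional steps is to pass them in as plain functions and skip any that aren't wired in. The sketch below assumes that design; `enrich`, `fake_search`, and `broken_api` are hypothetical names, and the fake search returns canned text instead of hitting the network.

```python
from typing import Callable, Optional

# An external agent here is just "query in, list of text snippets out".
SearchFn = Callable[[str], list[str]]


def enrich(
    symptoms: list[str],
    web_search: Optional[SearchFn] = None,
    api_call: Optional[SearchFn] = None,
) -> dict[str, list[str]]:
    """Collect extra context from whichever external agents are configured."""
    extra: dict[str, list[str]] = {}
    query = ", ".join(symptoms)
    for name, agent in (("web_search", web_search), ("api_call", api_call)):
        if agent is None:
            continue  # optional step that was never wired in
        try:
            extra[name] = agent(query)
        except Exception:
            extra[name] = []  # an external failure should not break the chain
    return extra


def fake_search(query: str) -> list[str]:
    """Hypothetical stand-in for a real web search call (no network)."""
    return [f"result for {query}"]


def broken_api(query: str) -> list[str]:
    """Hypothetical external API that always fails."""
    raise TimeoutError("upstream unavailable")
```

With only `web_search=fake_search` supplied, the result contains just the `web_search` key. If `broken_api` is also passed in, its entry comes back as an empty list and the rest of the chain can carry on.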

Final Triage and Storage:

- Triage & Recommendation Agent: The outputs from all previous agents were fed into the final Triage & Recommendation Agent. This agent classified the urgency of the situation and suggested the next steps for the user.

- SQLite Database: Finally, the results of the triage session were stored in a SQLite database for future reference and analysis.
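Here's a rough sketch of how this last stage could look, using Python's built-in `sqlite3` module. The urgency rules, the red-flag symptoms, the table name, and the column names are all assumptions made up for illustration; they are not medical guidance and not the schema we planned.

```python
import sqlite3

# Hypothetical red-flag symptoms, for illustration only.
RED_FLAGS = {"chest pain", "shortness of breath"}


def triage(symptoms: list[str], severity: str) -> tuple[str, str]:
    """Classify urgency and suggest a next step (toy rules, not medical advice)."""
    if RED_FLAGS & set(symptoms):
        return "emergency", "Call emergency services now."
    if severity == "severe":
        return "urgent", "See a clinician today."
    return "routine", "Monitor symptoms and book a routine appointment."


def save_session(conn: sqlite3.Connection, symptoms: list[str],
                 urgency: str, advice: str) -> int:
    """Store one triage result and return its row id."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS triage_sessions ("
        "id INTEGER PRIMARY KEY AUTOINCREMENT, "
        "symptoms TEXT NOT NULL, "
        "urgency TEXT NOT NULL, "
        "advice TEXT NOT NULL, "
        "created_at TEXT DEFAULT CURRENT_TIMESTAMP)"
    )
    cur = conn.execute(
        "INSERT INTO triage_sessions (symptoms, urgency, advice) VALUES (?, ?, ?)",
        (", ".join(symptoms), urgency, advice),
    )
    conn.commit()
    return cur.lastrowid


# Usage: an in-memory database keeps the example self-contained.
conn = sqlite3.connect(":memory:")
urgency, advice = triage(["fever", "cough"], "severe")
session_id = save_session(conn, ["fever", "cough"], urgency, advice)
```

Parameterised queries (the `?` placeholders) keep user-supplied symptom text from being interpreted as SQL, which matters when the input is free-form chat.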

What did the agent roadmap look like?

What happened during the 3-hour build?

The energy in the room was electric from the moment we arrived. After a quick check-in at 5:15 PM, we found our spot and got settled. The welcome talk, which started around 5:30 PM, was more than just an introduction; the host mentioned technologies from Google that were going to be helpful in the hackathon. Also, the host and guest representatives from several companies presented a series of challenges for us to tackle, with the winners receiving a cash prize. This was the perfect motivation for what was to come.

Due to the extended welcome and challenge presentations, the hackathon's coding session didn't officially begin until later, leaving us with only about two and a half hours to bring our project to life. With the clock ticking, we dived headfirst into our work. The pressure was immense, but our team synergy was incredible. Tom and I were on the backend, setting up the API endpoints with Python and FastAPI. Meanwhile, our frontend developer, Mohammed, brought our vision to life with Next.js, creating a sleek and intuitive chat interface. My role was to wrangle the AI agents: I worked on the Web Search Agent and on main.py, which brought all the agents together, tuning it to understand medical symptoms. Tom scripted the processing of the CSV data and also contributed to the AI agents. Every minute counted, and the room was buzzing with a mix of focused collaboration and intense pressure.

At 9:00 PM, it was "pencils down" and time for the demo pitches. It was nerve-wracking to present our AI Diagnosis Agent after such a short development time, but we were proud of what we had built. I was hesitant to present because there were still some features we hadn't completed, but Mohammed encouraged us to go for it, and we did when our turn came. We gave a live demo, showing how a user could describe their symptoms to our chatbot and receive a preliminary diagnosis and recommended next steps. We highlighted the potential of our project to help people get initial health guidance quickly and easily.

By 10:00 PM, the event was over. It was a whirlwind of activity, but in just a few short hours, we had taken an idea and turned it into a tangible product. We left feeling exhausted but also incredibly accomplished and excited about what we had created as a team.

What did we take away from the hackathon?

Picture

This is me on the far left, with three of my friends to my right. The person next to me, Caleb Areeveso, won a hackathon prize all by himself and got a whopping £1500. A CRACKED DEV IF I SAY SO MYSELF!!! Next to Caleb is my teammate Mohammed Ali, and on the far right is another good friend, Elis Hewes, who was on another team at the hackathon.

Reflection:

While we didn't win the grand prize, we learnt the value of teamwork, adaptability and working under pressure. It was amazing to see how three friends could come together and create something so impactful. I also learnt a lot about natural language processing and how to build a full-stack application from the ground up.

I do have to say that, because of the time constraint, we weren't able to build the Retriever Agent's data source, the API Call Agent, or the SQLite database that we originally intended. With more time, we could also have refined the code we did write.

Conclusion:

This hackathon was an unforgettable experience. It was a whirlwind of learning, building, and connecting with amazing people. If you're a student or anyone interested in tech, I can't recommend participating in a hackathon enough. You'll be amazed at what you can create in a single day. A huge thank you to my teammates, the organizers of Machine Talents, and all the other people in the hackathon.

Friends:

Elis Hewes: https://www.linkedin.com/in/ehewes/

Mohammed Ali: https://www.linkedin.com/in/mohammed-ali-52ba71242/

Tom Lam: https://www.linkedin.com/in/tom-kh-lam/

Caleb Areeveso: https://www.linkedin.com/in/caleb-areeveso/

Josh Alliet: https://www.linkedin.com/in/jalliet/

Related

- ScopeGuard: A Scope-Creep Radar for Client Projects — what I learned about chaining AI agents from this hackathon, applied to client work.

- Staying Ahead: Why Learning Is the Core Skill for Software Engineers — hackathons are one of the fastest ways to compress a full year of learning into one weekend.

Frequently asked questions

- What was the Google x Machine Talents hackathon?

- It was a short-form AI hackathon hosted by Machine Talents in partnership with Google, where teams had roughly 3 hours of coding time after a welcome session and challenge briefings. The featured challenge was to build an AI-powered symptom triage tool, and finalists pitched live demos at 9pm to a panel of company sponsors.

- What did your team build at the hackathon?

- An AI-powered symptom triage agent. A user describes their symptoms by text, voice, or form on a Next.js frontend; a FastAPI backend runs the input through a chain of agents — Extractor, Retriever, Reasoner, Web Search, and Triage — and returns a preliminary diagnosis plus recommended next steps.

- What is a chain-of-agents architecture?

- A chain-of-agents architecture splits a complex AI task into several specialised agents that each do one narrow job and pass structured output to the next. In our case the chain was symptom extraction → context retrieval → condition reasoning → web-validated check → urgency triage, with deterministic glue code between each step.

- What stack did you use?

- Next.js for the frontend chat interface, Python with FastAPI for the backend API, a CSV-derived medical knowledge base for the Retriever agent, and SQLite for storing triage sessions. Each agent was a separate Python module wired into a main.py orchestration entry point.

- What did you learn from the 3-hour build?

- The biggest lesson was that scope discipline beats ambition under time pressure. We had planned an API Call agent and a richer SQLite schema and shipped neither — the working version of the chain we did finish was more useful than a half-built fuller version. Hackathons reward whatever runs.